While many artists of all stripes fear the growing dominance of AI, some graphic designers have embraced it and made it their new creative partner. Here, writer Stan Cross asks six of these designers to show us how and why they made their own digital design tools and, most importantly, to share their outlook on how to use tech while keeping the humanity in their work.

The belief that the arts will remain impervious to the advances of artificial intelligence, and that human creativity will forever elude machines, is rapidly waning. Generative art is no longer confined to inscrutable research papers, tech conference conjecture and the furtive experiments of early adopters, but accessible to anyone with an internet connection. Since their ascent into the mainstream in the last few years, AI tools have demonstrably and rapidly improved. Within a very short space of time, the much-derided nightmare fuel of embryonic image generators has progressed to a point at which any casual user armed with little more than a few choice keywords can achieve convincing impersonations of humanity’s most celebrated artists, across almost every discipline related to image-making: painting, illustration, graphic design, animation, filmmaking and photography.

The as-yet-unrealized commercial implications of generative art are evidently numerous and profound, leaving many artists understandably worried about how it will affect their future income. One necessary corrective is to contend with its artistic implications. While off-the-shelf tools like Midjourney and Stable Diffusion allow their users to create credible enough forgeries of famous artists’ styles and works, they deprive those users of the fundamental cognition and mastery of craft required to have created these works in the first place.

A handful of text prompts may now be all that’s required to produce technically competent designs and aesthetically pleasing images, but if you outsource the majority of your creative decision-making to black box tools whose internal workings and algorithms remain opaque, you are necessarily abstracted from the process. Such a piece of software is not trying to express itself when it generates a result so much as trying to find some median output dictated by whichever visual data it’s been trained on and told to give primacy to. For me, creative expression requires a greater degree of human input, intent and authorship. This is what charges it with meaning.

This is not to say, however, that generative processes cannot be reconciled with and harnessed by these qualities. We do not have to submit ourselves to some notion that humans have been supplanted by AI art, particularly given generative tools themselves began life as lines of code authored by humans, and accordingly, their results are influenced by their engineers’ human biases. To build your own generative design tool, then, is to take advantage of that simple truth.

By inserting yourself in the inception, construction and refinement of such a tool, you retain a far greater degree of creative autonomy than closed mass-market systems allow. In proactively defining parameters and formal constraints which keep the algorithm steered towards an intended vision, you are no longer reduced to the role of passive observer or beta tester, reliant on Pictionary-style interpretive guesswork to produce satisfying outcomes. The collaboration between human and machine becomes more like a duet, with AI elevated beyond a mere parlor trick novelty, into a craft.

WePresent spoke to six artists who have integrated self-made tools into their practice, and whose work best epitomizes this burgeoning, hybrid form of creativity.

Matt DesLauriers

He didn’t realize it at the time, but tinkering with the MS-DOS video games of his childhood would give Matt DesLauriers a grounding in writing scripts that would later become a boon in a career in web development. In turn, that career taught him he could be an artist. He tells me an epiphany came while making a sketch using JavaScript for a laugh. “I looked at it and I was like, holy shit, this is like an artwork I’ve just made with my code.”

He now combines his twin disciplines to create artworks through code, which feature a heavy fascination with landscapes and terrain, inherited from growing up alongside Canada’s mountains. “Meridian” turns geological illustration into a rich, pseudo-handcrafted tapestry, while the undulating hillsides and mountain ranges of “Subscapes” are redolent of Peter Saville’s seminal “Unknown Pleasures” album artwork transposed to isometric angles.

Like most of DesLauriers’ work, these projects were created through bespoke programs written in JavaScript, using randomness within delineated rules to produce results of controlled chaos akin to the entropy of the natural world, something he’s exploring in an upcoming project concerned with simulating hydraulic erosion.
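For readers curious what “randomness within delineated rules” can look like in practice, here is a minimal sketch in Python. It is not DesLauriers’ code (his tools are written in JavaScript), and the function name `ridge_line`, its parameters and its bounds are all assumptions made for illustration. It draws one ridge profile as a random walk that is never allowed to leave a fixed band.

```python
import random


def ridge_line(seed, points=12, start=50.0, max_step=8.0, low=0.0, high=100.0):
    """Return one ridge profile: a random walk kept inside fixed bounds.

    Hypothetical illustration only; every name and default here is assumed.
    """
    rng = random.Random(seed)  # seeded, so the same seed redraws the same ridge
    heights = [start]
    for _ in range(points - 1):
        step = rng.uniform(-max_step, max_step)        # the random part
        nxt = min(high, max(low, heights[-1] + step))  # the rule: stay in bounds
        heights.append(nxt)
    return heights
```

Calling `ridge_line(1)` and `ridge_line(2)` gives two different ridges that obey the same constraints, while repeating a seed reproduces a ridge exactly, which is roughly the sense in which such work is both random and authored.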

“There’s something really interesting in the idea of actually using some of the real physical properties of how these erosion systems work. The more you learn about them, the more you can apply them. But then instead of just simulating it for real, you can tweak the algorithm, break it down and create something new,” he says, adding, “When you’re building your own tools like this, you have the freedom to play around with it.”

DesLauriers is a big believer in the public domain, particularly for the sake of longevity, and shares hundreds of his tools on GitHub. “If you rely too much on these systems that are closed source or privately owned, in ten years’ time, maybe the system’s not running anymore or I don’t have access to it. But if it’s an open-source system, it’s very likely that you can still get it working.”

Sofia Crespo

Sofia Crespo’s father was a sea captain. “When I was little, he used to travel for many months, and then he came back and always told me these stories about the sea, and brought things from other harbors where he’d stopped. I was always really looking forward to that moment,” she recalls.

From that point on, she was gripped by the sea and natural habitats, and by how many remain unexplored and undocumented. Her work is a fusion of art, creative technologies and biological study, creating and cataloging sprawling, mesmerizing taxonomies of artificial lifeforms.

Visually, it falls somewhere between the ornate scientific illustration of John James Audubon and Maria Sibylla Merian, a healthy dose of inspiration from Luigi Serafini’s surreal encyclopedia of an imaginary world “Codex Seraphinianus” and a decidedly digital sensibility. Vaporwave by way of “The Origin of Species”.

Each project takes in different environs and subject matter (the aquatic world, endangered species, insects, birds and jellyfish). Crespo’s process involves amassing real-world data and training models on it (taking care to only include material from sources she has been granted permission to use, in contrast to many mainstream generative tools), ranging from photographs, illustrations, sounds and 3D models to raw statistics, depending on intended outcomes. From there, she can generate thousands of variations, until patterns begin to emerge, and her virtual chimeras look near-convincing to the untrained eye.

Her first experiments with AI tools “felt like a magical black box.” She didn’t understand what was happening behind the scenes, just that her inputs were leading to outputs. “This element of magic faded away as I started to see it more as a software…to really see the limitations.” It’s a demystification that gives her “a lot more power to decide what the model is going to be trained on. Like, of course it’s a lot more work, but it makes it feel more mine now.”

“I find it really stimulating having to design a system, having all the pieces of the puzzle come together,” she concludes.

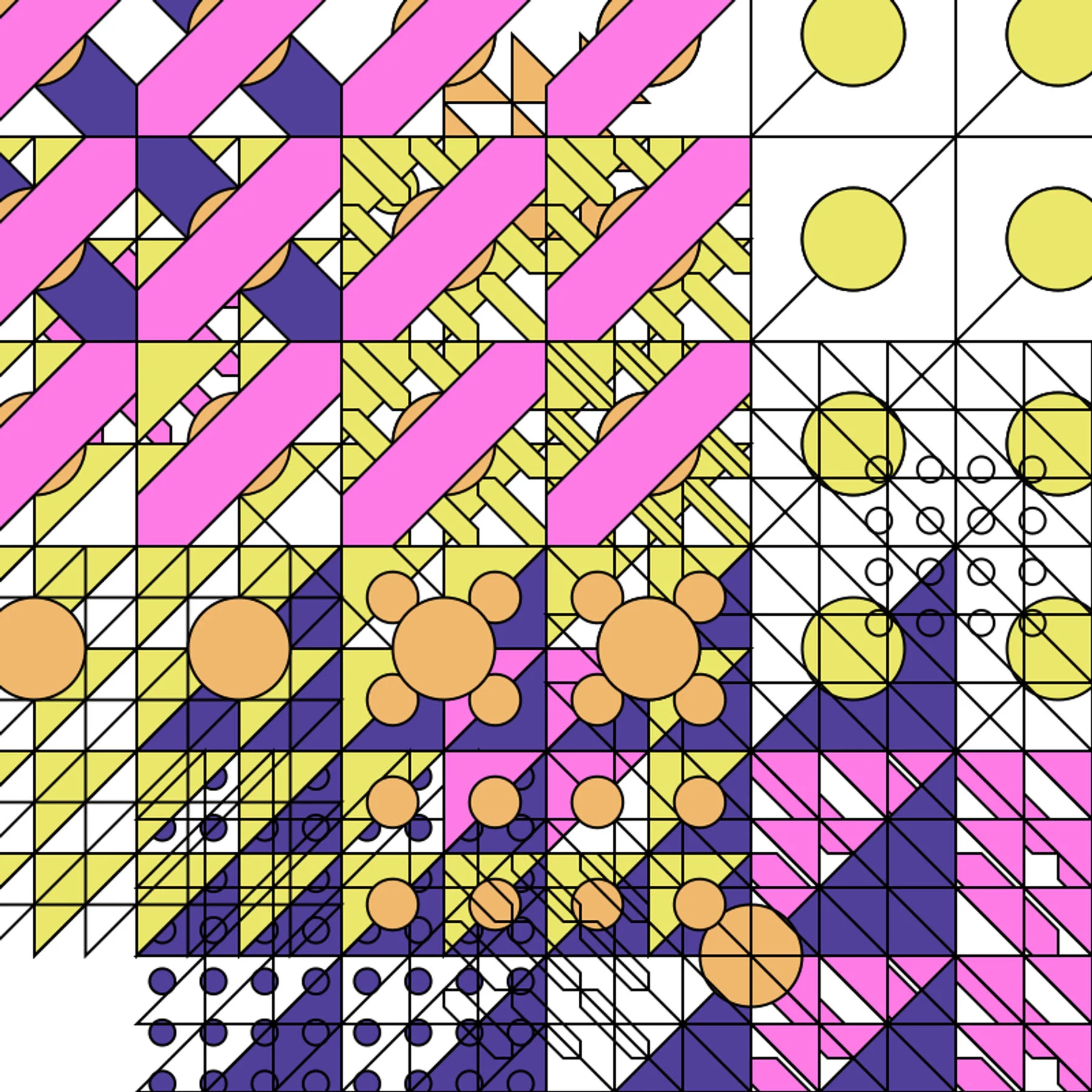

Saskia Freeke

In 2015, Saskia Freeke found an antidote to perfectionism: a month-long project requiring her to create one piece of generative art every day. She had to be strict with herself, to push any worries that the daily output wasn’t good enough out of her head and persevere. She’s been doing it ever since.

“On the one hand, it is kind of like work, to be continuously sketching,” she tells me. “Learning with the systems, trying to explore new things. But it’s also now, after so many years, more like meditation.”

Freeke creates her artwork with code—often using Processing—and the ensuing designs document the incremental refinement of an artistic process and of growing and waning fascinations. The work is sometimes animated, but almost always variants on a theme within grids and alignments. “I have many rules like: which color do you pick? How many shapes?” The grids here become a sort of visual manifestation of coding constraints and frameworks. “Being aware of these rules so that you can sometimes break them is, I think, needed if you are being creative.”
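The kind of rules Freeke lists (which color, how many shapes) can be written down quite literally. The Python sketch below is an assumption-laden illustration, not her Processing code: the palette, the function name `make_grid` and the `break_chance` parameter are all invented here. Each grid cell follows the rules, and a small probability lets a cell break alignment.

```python
import random

# Hypothetical three-color palette, chosen only for this example.
PALETTE = ["#f2545b", "#19323c", "#f3f7f0"]


def make_grid(seed, cols=4, rows=4, max_shapes=3, break_chance=0.1):
    """Describe a grid of cells, each obeying simple color and count rules."""
    rng = random.Random(seed)
    grid = []
    for _ in range(rows):
        row = []
        for _ in range(cols):
            cell = {
                "color": rng.choice(PALETTE),             # rule: limited palette
                "shapes": rng.randint(1, max_shapes),     # rule: 1..max_shapes
                "offset": 0.0,                            # rule: sit on the grid
            }
            if rng.random() < break_chance:
                cell["offset"] = rng.uniform(-0.5, 0.5)   # occasionally break it
            row.append(cell)
        grid.append(row)
    return grid
```

Setting `break_chance=0` yields a perfectly aligned grid; raising it loosens the structure, so the amount of rule-breaking becomes one more decision the artist makes.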

As a parallel, she observes an interesting intergenerational difference between those who grew up “collaborating” with technology and those who grew up “consuming” it.

“Social media is a lot of consuming. I think knowing what’s behind it is important. It’s often just a black box you are using. How does it work? What’s behind Instagram’s algorithms? Why am I seeing this image or this video instead of something else?” she muses. “I think having an idea of all those rules and structures yourself is less consuming, and more using it knowing they’re there. I think that’s important for everyone to understand.”

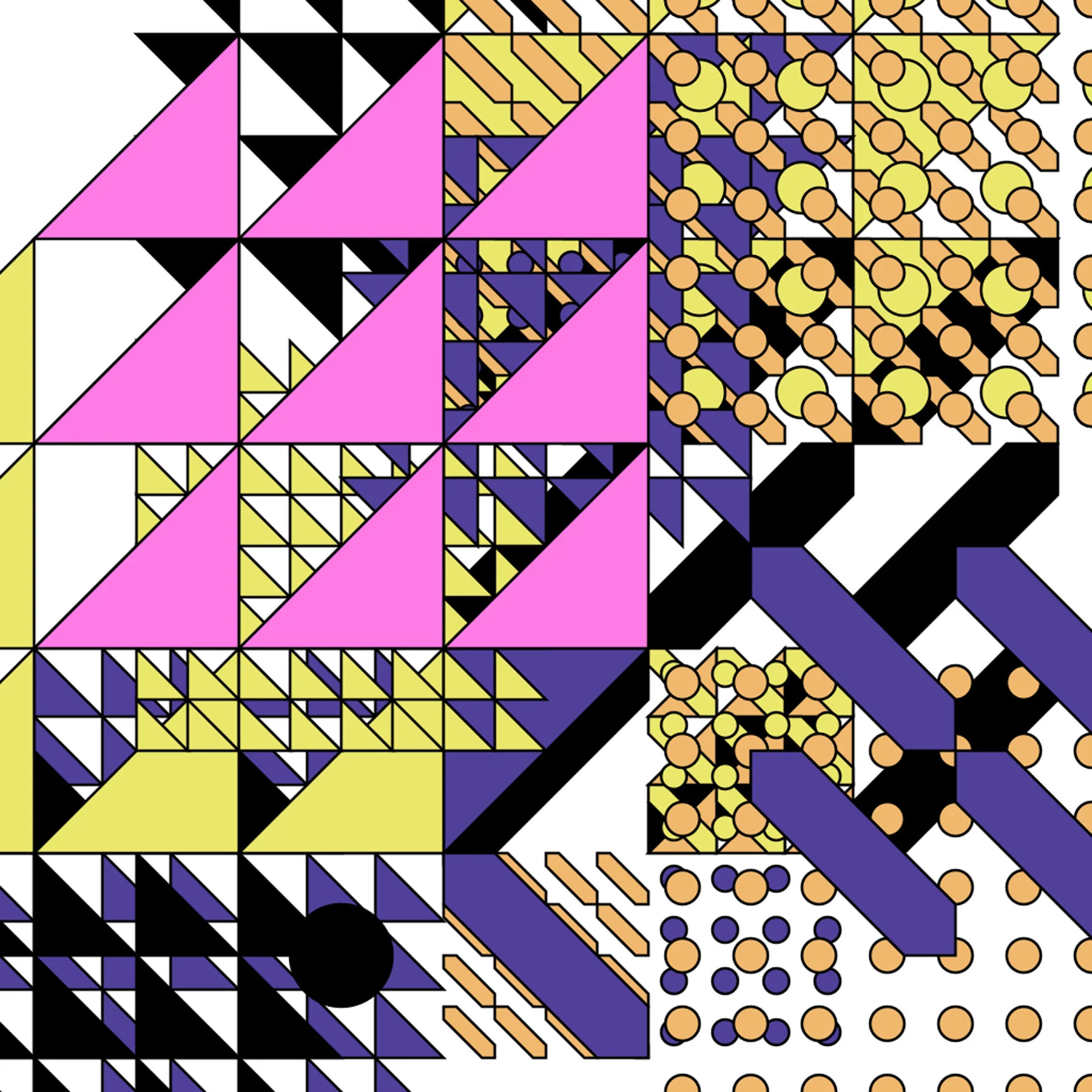

Raven Kwok

“I would say I find beauty in something systematically complex and delicate, but visually minimalist,” Raven Kwok (aka Guo, Ruiwen) tells me. “Life-like features are also something that intrigues me, like a full progress of birth, growth, decay and death. I tried to embody these elements in many of my past works.”

His work straddles numerous disciplines, among them animation and music videos, and is always kinetic, simulating interactions between algorithmically generated elements: particle systems, fractals, Voronoi tessellation and cellular automata.

In “1194D”, we experience something close to the sequences legendary special effects artist Douglas Trumbull created for “2001: A Space Odyssey” and “The Tree of Life”. A generative universe of triangles explodes into existence and reproduces itself many times over through subdivisions, its ecosystem of “algorithmic creatures” struggling for air, warping, distorting and eventually collapsing into nothingness.
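Subdivision itself is a simple rule to state. As a rough illustration only, and not a reconstruction of Kwok’s method, the Python sketch below splits a triangle into four smaller ones through the midpoints of its edges and repeats the process; the function names are assumptions introduced for this example.

```python
def midpoint(a, b):
    """Return the point halfway between 2D points a and b."""
    return ((a[0] + b[0]) / 2, (a[1] + b[1]) / 2)


def subdivide(tri, depth):
    """Recursively split a triangle into 4 children, `depth` times over."""
    if depth == 0:
        return [tri]
    a, b, c = tri
    ab, bc, ca = midpoint(a, b), midpoint(b, c), midpoint(c, a)
    children = [(a, ab, ca), (ab, b, bc), (ca, bc, c), (ab, bc, ca)]
    result = []
    for child in children:
        result.extend(subdivide(child, depth - 1))
    return result


def area(tri):
    """Area of a triangle from its three 2D corner points."""
    (x1, y1), (x2, y2), (x3, y3) = tri
    return abs((x2 - x1) * (y3 - y1) - (x3 - x1) * (y2 - y1)) / 2
```

Each pass multiplies the number of triangles by four while the total area stays the same, which is how a single shape can seem to reproduce itself many times over.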

He begins projects with a period of “self-initiated R&D”, studying research papers to find rules and principles to integrate into his work, before executing it “more like an engineer”, so as to consciously avoid surrendering artistic control over the process. “I don’t find it convincing when an ‘artist’ claims authorship of a piece in which he or she provides little input or effort,” he says.

“What matters to me is still the uniqueness of an artist in their creative expression. Even if they employ AI tools, their work would stand out from works by others using the same AI tool. This remains constant throughout history. I believe it will still do in the future.”

David Benski

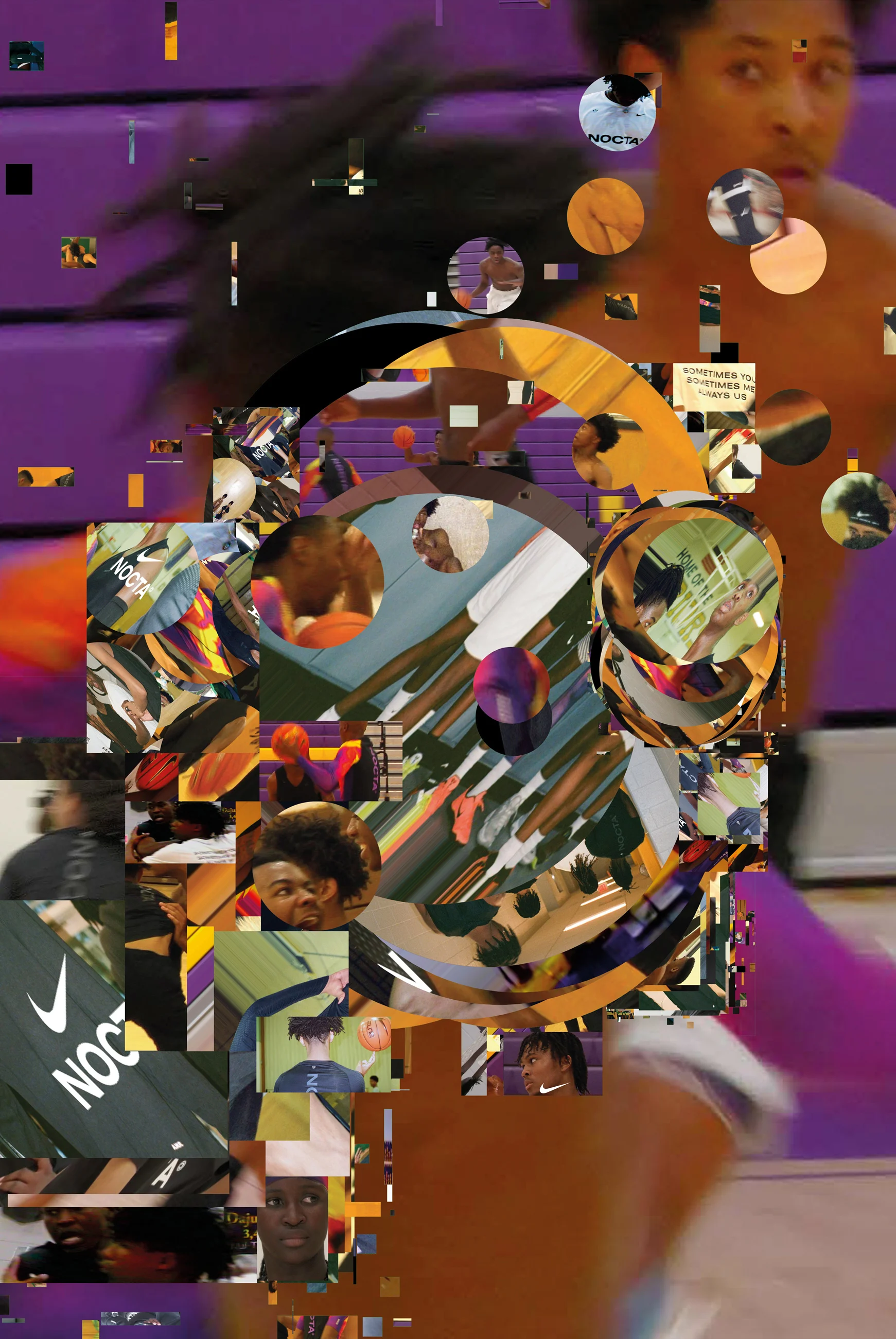

“I found immense fascination in the idea that something, following given instructions, could generate a multitude of iterations until I deemed it suitable to stop.” This was the genesis of Berlin-based graphic designer David Benski’s collage processing tool, which he initially intended as a “virtual slot machine” able to throw up all manner of unexpected visual juxtapositions. It’s since become a staple of his practice, his favorite execution thus far coming as part of a series of collaborative projects with Nike.

The tool’s earliest guise sought to access Google’s image results, extract sections of images and position them in arbitrary sizes over a canvas. Though the initial results were enjoyable precisely for being governed by chance, he gradually found he “yearned for greater precision and control”.

This led to several iterations, with Benski taking manual control over the image collation process, and working with collaborators to incorporate probabilistic factors aligned with his intent. The tool pays homage to the cut-and-paste aesthetic of DIY zines, whose charm lies in their carefully crafted contrasts and imperfections. “I believe that a substantial portion of artistic control resides in the process of developing the tool itself. Decisions related to its capabilities, functionalities and user interaction significantly shape the creative outcome.”
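To give a sense of how chance and control might coexist in a collage tool of this kind, here is a small Python sketch. It is an assumption throughout, not Benski’s tool: the function `place_fragments`, the file names and the size limits are hypothetical. Fragments land at random positions and sizes on the canvas, while hand-set weights decide how often each hand-picked source image is used.

```python
import random


def place_fragments(seed, sources, weights, count=5, canvas=(800, 600)):
    """Plan where fragments of source images go on a canvas.

    `sources` are hand-picked image names; `weights` bias how often each
    one is chosen. All names and limits here are hypothetical.
    """
    rng = random.Random(seed)
    canvas_w, canvas_h = canvas
    placements = []
    for _ in range(count):
        name = rng.choices(sources, weights=weights, k=1)[0]  # weighted chance
        w = rng.randint(50, canvas_w // 2)   # arbitrary size, within limits
        h = rng.randint(50, canvas_h // 2)
        x = rng.randint(0, canvas_w - w)     # keep the fragment on the canvas
        y = rng.randint(0, canvas_h - h)
        placements.append({"source": name, "x": x, "y": y, "w": w, "h": h})
    return placements
```

For example, `place_fragments(1, ["zine.jpg", "shoe.jpg"], [3, 1])` would favor the first image roughly three to one, so the slot machine still spins but the odds are set by the designer.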

Benski isn’t necessarily down on the prospect of AI’s advances in the world of design, but he is wary of algorithmic optimization resulting in “more straightforward” aesthetics that lose their capacity to surprise.

In this context, he believes “crafting and utilizing one’s own tools serves as a potent strategy,” one which would have the added benefit of “effectively crystallizing your individual approach to a concept.”

“Envision crafting your own version of InDesign or Photoshop, even if it might not reach the same level of refinement,” he says. “Despite its potential imperfections, it would undeniably encapsulate your distinct perspective, resulting in a truly unique creation.”

Carolina Melis

After spending 20 years in animation and illustration with fixed processes and workflows, Carolina Melis began feeling the urge to introduce an element of chance, to produce “an ecosystem rather than one-off pieces of art.”

The result is Kubikino, a series of generatively created portraits composed entirely of geometric shapes in a vivid but deliberately limited color palette. This “community of faces” was informed by 18 months of research and the study of avatars and masks from different cultures and eras. “The true challenge was maintaining minimalism. Complicating things is easy; simplifying is difficult.”

The move to a generative approach hasn’t represented a complete rupture from Melis’ prior practice; rather, it has been assimilated and incorporated into it. Before devising the coding rules that would govern how the faces would be generated, she invested significant time sketching on paper and “delving into familiar fields like graphic design, choreography and animation.” She tells me AI has given her a different means of perceiving creativity. “There is a stage between the idea and the execution, which involves re-creation or generation, facilitated by the machine.”

To Melis, as paradoxical as she admits it may seem, the computer is an artistic partner, not a usurper. For her, the collaborative aspect is in the human-designed rules and instructions that dictate the machine’s improvisations. “Consider planting a seed. If it’s a rose seed, you have a general idea of what to expect. However, a specific set of conditions will determine the exact appearance and characteristics of the rose that will bloom from that seed.”

Continuing this metaphor, she finishes: “Generative art is like the life cycle of a garden, whereas traditional art is a painting of a fully bloomed flower.”